Backblaze, a backup and cloud storage company, has published data comparing the long-term reliability of solid-state drives to that of traditional hard drives running in its data center. Based on data collected since the company began using SSDs as boot drives in late 2018, Backblaze cloud storage evangelist Andy Klein published a report yesterday showing that the company's SSDs fail at a much lower rate than its hard drives as the drives age.

Backblaze has been publishing drive failure statistics (and related commentary) for years now; its hard-drive-focused reports track the behavior of tens of thousands of data storage and boot drives across most major manufacturers. The reports are comprehensive enough that we can draw at least some conclusions about which companies make the most (and least) reliable drives.

The sample size for this SSD data is much smaller, in terms of the number and variety of drives tested – they’re mostly 2.5-inch drives from Crucial, Seagate, and Dell, with little representation from Western Digital/SanDisk and no data from Samsung drives at all. This makes the data less useful for comparing relative reliability between companies, but it can still be useful for comparing the overall reliability of hard drives with that of SSDs doing the same work.

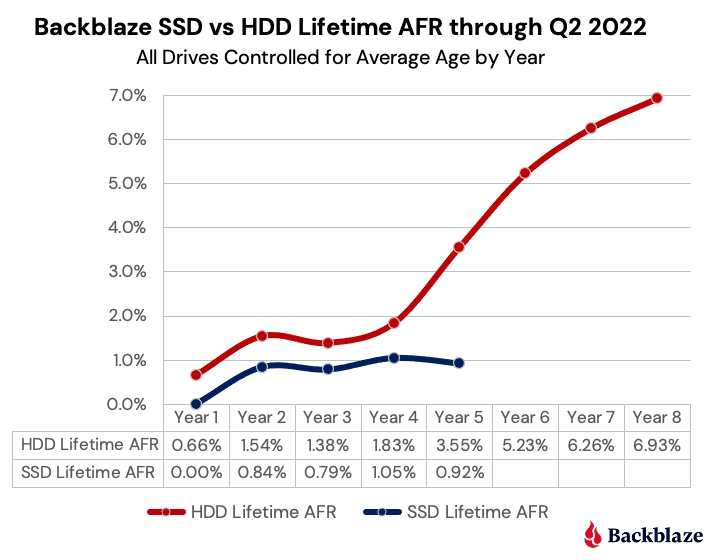

Backblaze's data indicates that hard drives begin to fail more often in their fifth year, while SSDs keep going.

Backblaze uses SSDs as boot drives for its servers rather than for data storage, and its data compares these drives to hard drives that were also used as boot drives. The company says these drives handle storing logs, temporary files, SMART stats, and other data in addition to booting; they don't write terabytes of data every day, but they don't just sit idle once the server has booted either.

During the first four years of service, SSDs fail at a lower rate than HDDs overall, but the curves look similar: few failures in the first year, a jump in the second, a slight decrease in the third, and another increase in year four. But once you reach year five, hard drive failure rates start to rise rapidly, jumping from 1.83 percent in the fourth year to 3.55 percent in the fifth. Backblaze's SSDs, on the other hand, continued to fail at about the same 1 percent rate as they did the year before.
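To put the hard drive jump in perspective, the two figures quoted above can be compared directly. This is only arithmetic on the percentages reported in the article, not a reproduction of Backblaze's own analysis; the variable names are illustrative.

```python
# Hard drive failure rates quoted in the article (percent per year).
hdd_year4 = 1.83
hdd_year5 = 3.55

# How many times higher is the year-five rate than the year-four rate?
ratio = hdd_year5 / hdd_year4
increase_pct = (ratio - 1) * 100

print(f"Year-five HDD failure rate is {ratio:.2f}x the year-four rate "
      f"(+{increase_pct:.0f}%)")
# prints: Year-five HDD failure rate is 1.94x the year-four rate (+94%)
```

In other words, the hard drive failure rate nearly doubles between years four and five, while the SSD rate stays roughly flat.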

These findings, both the reliability gap between the two and the fact that hard drives start wearing out sooner than SSDs, seem intuitive. All else being equal, you would expect a device with a lot of moving parts to have more points of failure than one with no moving parts. But it's still interesting to see that case made with data from thousands of drives over several years of use.

Klein speculates that SSDs “could hit the wall” and start failing at higher rates as their NAND flash chips wear out. If so, you'd expect lower-capacity drives to begin failing at a higher rate than higher-capacity drives, because a drive with more NAND has higher write endurance. You'd also likely see a lot of these drives start failing around the same time, since they all do a similar job. Home users who constantly create, edit, and move large multi-gigabyte files may see their drives wear out faster than they would in Backblaze's usage scenario.

For anyone who wants to dig into the raw data that Backblaze uses to generate its reports, the company makes it available for download.
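For readers who do download the data, here is a minimal sketch of how an annualized failure rate (AFR) can be computed from daily drive records. It assumes one row per drive per day and a `failure` column that is 1 on the day a drive fails and 0 otherwise; the column names, the function name `annualized_failure_rate`, and the sample rows are assumptions for illustration, so check them against the actual files before relying on the result.

```python
import csv
import io


def annualized_failure_rate(rows):
    """Return the AFR in percent: failures / (drive_days / 365) * 100.

    `rows` is an iterable of dicts, one per drive per day (assumed layout).
    """
    drive_days = 0
    failures = 0
    for row in rows:
        drive_days += 1                   # each row counts as one drive day
        failures += int(row["failure"])   # 1 on the day a drive fails
    if drive_days == 0:
        raise ValueError("no drive days recorded")
    return failures / (drive_days / 365) * 100


# Made-up sample: two drives observed for 365 days each,
# with drive "B2" failing on its last day.
lines = ["day,serial_number,failure"]
for day in range(365):
    lines.append(f"{day},A1,0")
    lines.append(f"{day},B2,{1 if day == 364 else 0}")
sample = io.StringIO("\n".join(lines))

afr = annualized_failure_rate(csv.DictReader(sample))
print(f"AFR: {afr:.1f}%")  # prints: AFR: 50.0%
```

Normalizing by drive days rather than by drive count matters because drives enter and leave service at different times, so a simple failures-per-drive figure would overstate or understate the rate.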